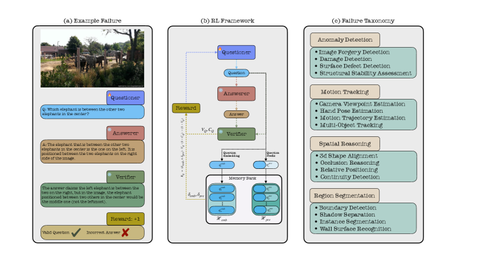

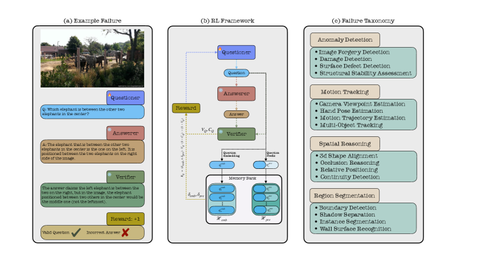

I am a Ph.D. candidate at Mila, co-advised by Prof. Sarath Chandar and Prof. Ross Goroshin. My research interests are continual learning, lifelong learning, and streaming reinforcement learning.

Previously, I completed my Master's at IIIT Hyderabad, co-advised by Prof. Vineet Gandhi and Prof. K. Madhava Krishna, working on visual grounding, language-guided navigation, and multi-view perception.

An RL framework that trains a questioner agent to generate increasingly complex queries that automatically expose weaknesses in vision-language models without human intervention.

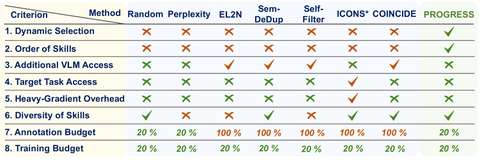

A data-efficient training framework for VLMs that dynamically selects samples based on the model's learning propensity.

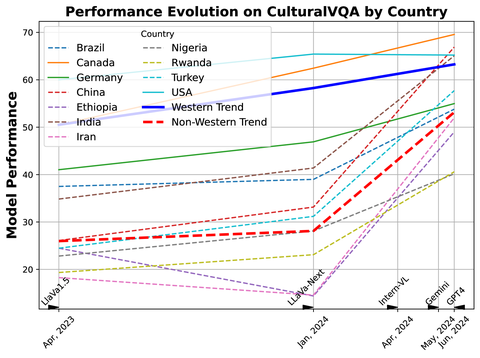

CulturalVQA: a benchmark for evaluating VLMs' understanding of geo-diverse cultural elements, revealing performance disparities across regions and facets.

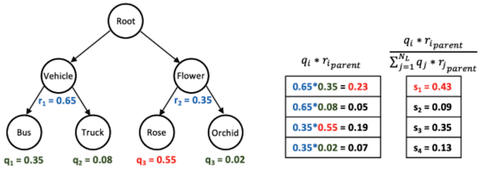

Hierarchical Ensembles (HiE) — a post-hoc strategy that leverages label hierarchy to reduce mistake severity in fine-grained classification at test time.

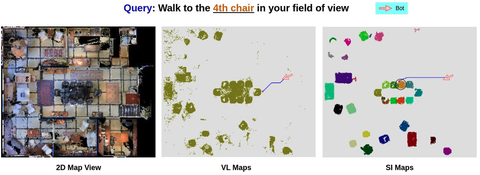

An instance-focused scene representation enabling language-based navigation that grounds references to specific instances within an environment.

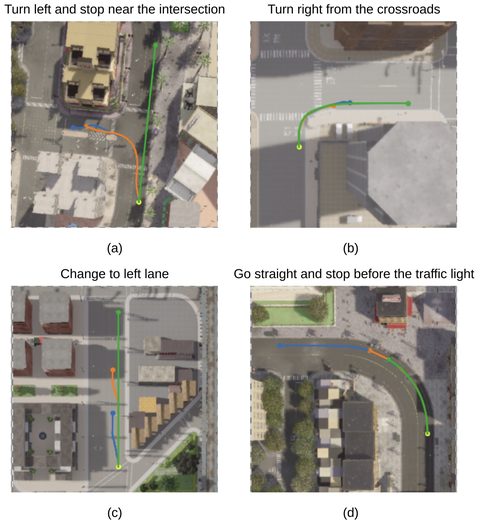

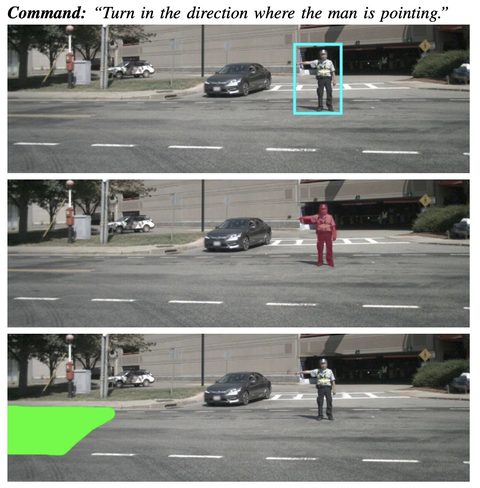

Vision-and-Language Navigation for outdoor autonomous driving — explicitly grounds navigable regions from text and uses them as guidance for the navigation stack.

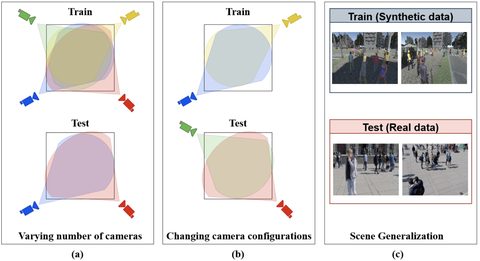

Existing multi-view detectors overfit to single scenes — we formalize three critical forms of generalization and propose evaluations for them.

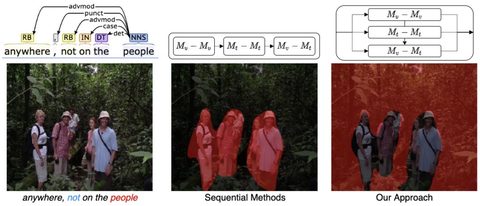

A Transformer-based architecture capturing all forms of multi-modal interaction synchronously for referring image segmentation.

A visual-grounding-based approach to language-guided navigation that brings interpretability to Vision-Language Navigation.